Evinact Senior Manager Mainaaz Oakley and Director Jane Brimacombe explain why vendor due diligence is one of the most consequential, and most overlooked, privacy controls available to organisations adopting AI.

Most organisations adopting AI are focused on what the technology can do. Fewer have seriously examined the privacy risk they’ve inherited through the vendors that make the technology possible. As AI adoption accelerates and privacy obligations tighten, that gap is becoming harder to ignore.

The technology choices organisations make, including what they buy and who they buy it from, have consequences that extend well beyond the initial procurement decision.

Vendor due diligence is a core privacy control because it directly shapes how privacy obligations are met in practice. Procurement decisions determine what data is collected, how it flows, who can access it, how long it is retained, and how quickly issues can be identified and resolved.

When you buy capability, you inherit privacy risk, and often in ways that aren’t immediately visible.

The OAIC guidance on commercially available AI products reinforces what many organisations are already experiencing: AI adoption can amplify privacy risk across inputs, outputs, access, retention and secondary use.

Critically, privacy obligations can apply both to the personal information you put into an AI system and to the personal information the system generates, including inferred, derived, or incorrect information about an identifiable individual.

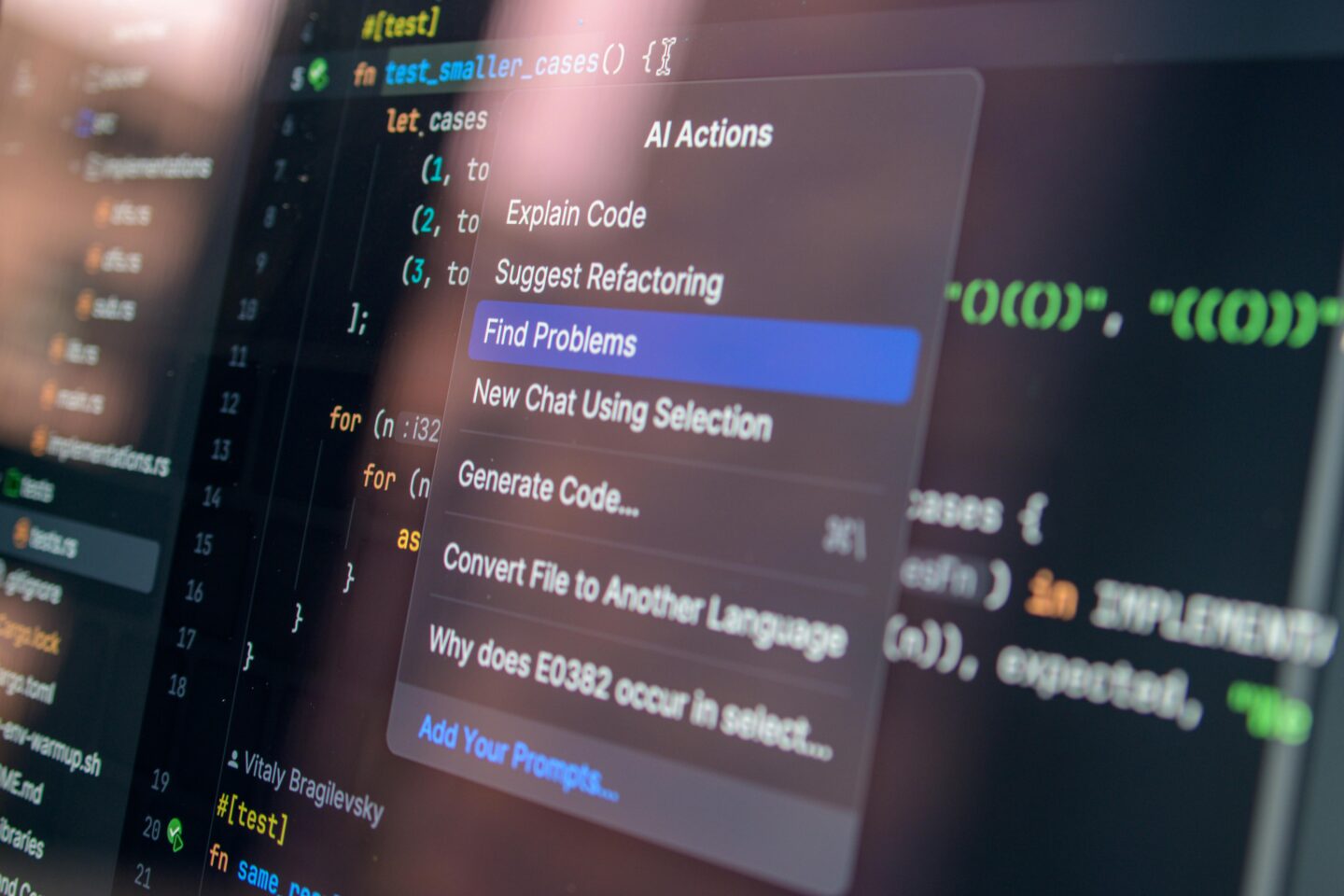

This shifts the due diligence question from a basic assurance check – does the vendor have a security certificate? – to a more defensible and outcome-focused question: Is this solution suitable for our intended use, and can we evidence that decision under privacy regulator scrutiny?

Five practical controls to mitigate privacy risk in AI procurement

Effective vendor due diligence moves beyond documentation review to actively reducing privacy risk. These five controls provide a practical foundation, and a more defensible basis for decisions that may later face regulatory scrutiny.

Start with the use case. Assess whether the solution is suitable for your specific context, including what personal information will be involved and why. This grounds the procurement decision in necessity and proportionality, the baseline test for lawful data use, before any contract is signed.

Design human oversight deliberately. Where human review is expected, define clearly where it applies, how it operates in practice, and what evidence will be retained. A key distinction is often overlooked: “a human can provide oversight” is not the same as “a human will provide effective oversight”.

Treat transparency as a requirement. Require clear, usable information about how personal information is handled: what is collected, what is generated, what is retained, where it is stored, and who can access it, including subcontractors. Without this, organisations cannot meet baseline privacy obligations, let alone demonstrate them to a regulator.

Contract for boundaries. Define permitted purposes explicitly and prohibit unauthorised secondary use of personal information. When contracts are silent on secondary use, they leave vendors room to repurpose data in ways that conflict with both legislative obligations and stakeholder expectations.

Build in accountability. Establish contractual obligations for incident notification, investigation support and access to audit logs. When a breach or complaint occurs, the organisations best placed to respond are those that built the right obligations into their contracts before they needed them.

Ongoing governance matters as much as selection

A common mistake is treating vendor due diligence as a one-off procurement activity. In practice, third-party privacy risks evolve.

Vendors introduce new features, add sub-processors, or change data handling practices, often in ways that weren’t part of the original assessment. If governance ends at contract execution, those changes go unnoticed until they become problems.

Consider what this looks like in practice. A vendor quietly adds a sub-processor in a new jurisdiction. An AI feature is updated to retain user inputs for model training. A change in ownership shifts the data handling practices your original assessment relied on. None of these requires a vendor to act in bad faith, but all of them can create material privacy exposure for organisations that did not see them coming.

Effective organisations manage privacy risk across the full vendor lifecycle: evaluation, risk assessment, contracting, onboarding, ongoing monitoring, periodic assurance, and off-boarding. Each stage is an opportunity to identify issues, maintain clear evidence, and reduce the kind of surprise risk that surfaces when a vendor change is discovered only after a complaint or incident.

In practical terms, this means clear ownership of the vendor relationship beyond the procurement team, regular review triggers built into contracts, and documentation maintained to a standard that would withstand regulatory scrutiny.
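One way to make a review trigger concrete is to compare what a vendor declares today against what the original assessment recorded. The Python sketch below is illustrative only: `VendorSnapshot`, its fields, `review_triggers` and the example vendor names are all hypothetical, not part of any standard or product, and a real vendor register would likely track more attributes than these.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorSnapshot:
    """Hypothetical record of what a vendor declared at a point in time."""
    sub_processors: frozenset[str]
    hosting_regions: frozenset[str]
    retention_days: int
    trains_on_customer_inputs: bool
    owner: str


def review_triggers(baseline: VendorSnapshot, current: VendorSnapshot) -> list[str]:
    """Return a reason for each change that should prompt a reassessment."""
    triggers = []
    for name in sorted(current.sub_processors - baseline.sub_processors):
        triggers.append(f"new sub-processor: {name}")
    for region in sorted(current.hosting_regions - baseline.hosting_regions):
        triggers.append(f"new hosting region: {region}")
    if current.retention_days > baseline.retention_days:
        triggers.append(
            f"retention extended: {baseline.retention_days} -> {current.retention_days} days"
        )
    if current.trains_on_customer_inputs and not baseline.trains_on_customer_inputs:
        triggers.append("customer inputs now used for model training")
    if current.owner != baseline.owner:
        triggers.append(f"change of ownership: {baseline.owner} -> {current.owner}")
    return triggers


# Example with made-up values: the assessed position versus today's declaration.
baseline = VendorSnapshot(
    frozenset({"CloudHost"}), frozenset({"AU"}), 30, False, "Vendor Pty Ltd"
)
current = VendorSnapshot(
    frozenset({"CloudHost", "TranscribeCo"}), frozenset({"AU", "US"}), 30, True, "Vendor Pty Ltd"
)
for reason in review_triggers(baseline, current):
    print(reason)
```

A comparison like this only surfaces the change. Someone still has to own the decision about whether the original assessment holds, and to record that decision as evidence.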

At Evinact, this is increasingly where we’re working with organisations: helping teams move from isolated procurement controls to governance that holds up across the full lifecycle of a vendor relationship.

A final word on public GenAI tools

The OAIC’s position is clear: don’t enter personal information, particularly sensitive information, into publicly available generative AI tools. The privacy risks, in its words, are “significant and complex.”

That guidance is easy to follow in theory and easy to forget under pressure. In high-pressure delivery cycles, informal workarounds emerge and people reach for the fastest available tool.

If the only thing standing between your organisation and a privacy breach is someone remembering not to paste personal information into ChatGPT, you don’t have a control environment. You have an honour system.

Organisations should instead implement clearer guardrails, targeted training, and safer, approved alternatives before that pressure arrives, not in response to it.
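As a rough illustration of a technical guardrail, the Python sketch below checks text for obvious identifiers before it is sent to an external tool. It rests on several assumptions: the `PATTERNS` table and `flag_personal_information` are hypothetical names, the phone pattern assumes Australian-style numbers, and pattern matching of this kind catches only obvious identifiers. It will miss names, health details and anything identifiable from context, so it would sit alongside training and approved alternatives rather than replace them.

```python
import re

# Illustrative patterns only: they catch obvious identifiers, not names or context.
PATTERNS = {
    "email address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # Assumes Australian-style numbers such as 0412 345 678 or +61 412 345 678.
    "phone number": re.compile(r"(?<!\d)(?:\+?61[ -]?|0)[2-478](?:[ -]?\d){8}(?!\d)"),
}


def flag_personal_information(text: str) -> list[str]:
    """Return the label of each pattern found in text bound for an external tool."""
    return [label for label, pattern in PATTERNS.items() if pattern.search(text)]


# Example: warn, or block, before the prompt leaves the organisation.
prompt = "Draft a reply to jane@example.com, mobile 0412 345 678."
findings = flag_personal_information(prompt)
if findings:
    print("Possible personal information detected:", ", ".join(findings))
```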

Trust is built through defensible decisions

Leaders don’t earn trust by promising perfect technology. They earn it by making privacy and risk decisions that withstand scrutiny.

In practice, that means treating procurement and contract management as core privacy controls, not as perfunctory administrative steps before the real work begins. Contracts are where expectations become enforceable, and where organisations create the conditions to respond effectively when things go wrong.

That discipline is what separates organisations that manage privacy risk from those that inherit it.